For more than a decade, cloud computing dominated enterprise infrastructure strategy. Businesses moved applications, storage, analytics, and AI workloads into centralized data centers because the cloud offered scalability and flexibility that traditional systems could not match.

In 2026, the conversation has changed. Companies are realizing that sending every piece of data to distant servers creates new operational problems. AI tools need near-instant responses, smart devices generate enormous amounts of data, and industries that rely on automation cannot tolerate delays caused by network latency.

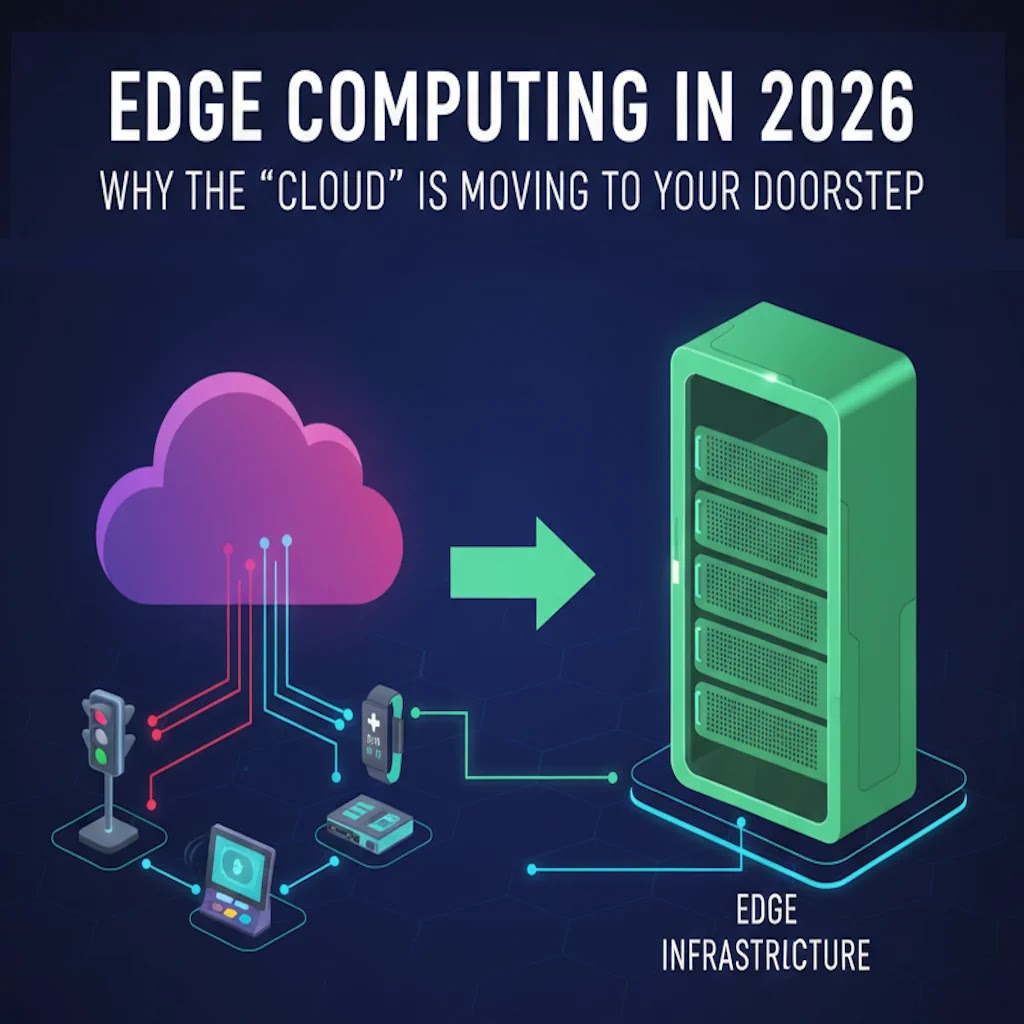

That is why edge computing is becoming one of the most important infrastructure trends of the year.

Instead of processing everything inside remote cloud environments, edge computing moves data processing closer to where information is created. This could mean local servers inside factories, AI chips inside vehicles, processing units inside retail stores, or intelligent gateways connected to industrial equipment.

After analyzing enterprise technology adoption trends across manufacturing, logistics, healthcare, and retail sectors, one pattern is becoming obvious. Businesses no longer see edge infrastructure as experimental technology. They see it as a practical solution for speed, cost control, operational resilience, and real-time AI execution.

Edge computing also supports the rapid growth of modern technologies like sustainable blockchain infrastructure, AI automation platforms, and advanced quantum-resistant security systems, all of which require faster and more distributed processing environments.

What You Will Learn in This Guide

- Why edge computing adoption is accelerating in 2026

- How low latency infrastructure improves AI performance

- Real business use cases across multiple industries

- How companies reduce bandwidth and cloud costs

- The biggest cybersecurity risks of distributed systems

- Best practices for successful edge deployment

- Who should invest in edge infrastructure and who should wait

For many organizations, the biggest shift is strategic. Instead of asking, “How much can we move to the cloud?”, companies are now asking, “Which workloads should stay local for maximum speed and efficiency?”

Why Low Latency is Becoming a Competitive Advantage

Latency refers to the time required for data to travel between systems and return with a response. In traditional cloud environments, information often travels across large geographic distances before processing happens.
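To see why distance matters, here is a rough back-of-the-envelope sketch in Python. It assumes signals travel through optical fiber at about 200,000 km/s (roughly two thirds of the speed of light) and ignores routing, queuing, and processing time, so real round trips take longer. The function name and the sample distances are illustrative assumptions, not figures from a specific deployment.

```python
def round_trip_propagation_ms(distance_km: float,
                              fiber_speed_km_per_s: float = 200_000.0) -> float:
    """Lower bound on round-trip time from signal propagation alone."""
    # Out and back, converted from seconds to milliseconds.
    return 2 * distance_km * 1000 / fiber_speed_km_per_s


# Illustrative distances only: a distant cloud region vs. an on-site server.
cloud_ms = round_trip_propagation_ms(1500)   # 15.0 ms before any processing
edge_ms = round_trip_propagation_ms(0.1)     # effectively zero
print(f"cloud: {cloud_ms:.1f} ms, edge: {edge_ms:.3f} ms")
```

Propagation is only the floor. Every network hop and every queue in between adds to it, which is why shortening the path usually helps more than the raw distance suggests.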

For basic applications like email, backups, or document storage, this delay may not matter. For modern AI systems and automated operations, it matters significantly.

Consider a manufacturing robot detecting a safety issue on an assembly line. Waiting even a fraction of a second for cloud processing could let a hazard persist or force an unnecessary production stop. The same applies to autonomous vehicles, financial trading systems, and AI-powered healthcare monitoring.

Edge computing addresses this problem by processing data closer to the device itself. Depending on the workload and the network, this can cut response times from hundreds of milliseconds to single-digit milliseconds.

That improvement may sound technical, but its business impact is very practical.

- Faster automation decisions

- Reduced operational downtime

- Better customer experiences

- More stable AI systems

- Lower dependency on internet quality

In retail environments, edge-powered checkout systems can process customer transactions locally even during unstable internet conditions. In logistics, edge sensors inside warehouses can track inventory movement immediately without relying entirely on centralized cloud analysis.
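A common way to get this kind of offline tolerance is a store-and-forward queue: the device accepts work locally and uploads it once the connection returns. The sketch below is a minimal in-memory version under stated assumptions. The class name, the transaction fields, and the `send` callback are hypothetical, and a real checkout system would also persist the queue to disk.

```python
from collections import deque
from typing import Callable


class OfflineTransactionQueue:
    """Keeps transactions locally and forwards them when the uplink works."""

    def __init__(self) -> None:
        self._pending = deque()

    def record(self, transaction: dict) -> None:
        # The sale is accepted locally right away, regardless of connectivity.
        self._pending.append(transaction)

    def flush(self, send: Callable[[dict], bool]) -> int:
        """Try to upload pending items in order; stop at the first failure."""
        sent = 0
        while self._pending:
            if not send(self._pending[0]):
                break  # Uplink is down; keep the rest for the next attempt.
            self._pending.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._pending)


queue = OfflineTransactionQueue()
queue.record({"id": 1, "total": 19.99})
queue.record({"id": 2, "total": 5.00})
queue.flush(lambda txn: False)  # simulated outage: nothing leaves the store
queue.flush(lambda txn: True)   # connectivity restored: both are uploaded
```

Items are removed only after `send` reports success, so a failed upload never loses a transaction.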

One trend becoming increasingly common in 2026 is “AI at the edge”, where machine learning models operate directly on local hardware instead of sending every request to cloud GPUs.

As explained in our guide on AI-powered asset management, faster decision-making systems often lead directly to measurable operational savings.

In industrial environments across India and Southeast Asia, businesses are increasingly using local edge servers to monitor machine vibration, temperature, and equipment health in real time. Instead of waiting for centralized analysis, maintenance teams receive alerts within moments, helping reduce costly breakdowns.

Real World Edge Computing Use Cases

One reason edge computing adoption is accelerating is that its benefits are practical, measurable, and industry-specific.

Businesses are no longer investing in edge infrastructure because it sounds innovative. They are adopting it because it solves real operational problems.

Smart Manufacturing

Factories generate enormous amounts of sensor data every second. Sending all of that information to remote cloud systems increases both bandwidth usage and response delays.

Edge gateways allow factories to process sensor data locally. Systems can instantly detect abnormal vibration patterns, overheating components, or production inefficiencies.
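One simple way a gateway could detect abnormal vibration locally is a rolling z-score check: compare each new reading with the mean and spread of the most recent readings. The sketch below is an assumption about how such a check might look, not a description of any particular product. The class name, window size, and threshold are illustrative.

```python
from collections import deque
from statistics import mean, pstdev


class VibrationMonitor:
    """Flags readings that deviate strongly from the recent rolling window."""

    def __init__(self, window_size: int = 20, z_threshold: float = 3.0) -> None:
        self.window = deque(maxlen=window_size)
        self.z_threshold = z_threshold

    def is_anomaly(self, reading: float) -> bool:
        anomalous = False
        # Wait for a full window so the baseline is meaningful.
        if len(self.window) == self.window.maxlen:
            baseline = mean(self.window)
            spread = pstdev(self.window)
            if spread > 0:
                anomalous = abs(reading - baseline) / spread > self.z_threshold
        # Only normal readings update the baseline, so a fault does not
        # gradually become the "new normal".
        if not anomalous:
            self.window.append(reading)
        return anomalous
```

Because the check needs only a short window of recent values, it can run on modest gateway hardware and raise an alert without a round trip to the cloud.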

Manufacturers using predictive maintenance often experience:

- Reduced unplanned downtime

- Longer equipment lifespan

- Lower maintenance costs

- Improved production consistency

Retail and Smart Stores

Retailers are using edge AI for customer analytics, shelf monitoring, inventory tracking, automated checkout systems, and in-store security.

One major advantage is bandwidth efficiency. Instead of streaming continuous video feeds to the cloud, local AI systems analyze footage directly inside the store and send only important events to centralized systems.

This reduces cloud storage costs while improving response speed.

Healthcare Systems

Healthcare organizations depend heavily on reliable real-time monitoring. Edge computing helps hospitals process patient data locally, reducing delays for critical alerts.

In emergency care environments, even minor latency improvements can influence medical response times.

Hospitals are also increasingly using edge systems for:

- Remote patient monitoring

- Medical imaging analysis

- Connected medical devices

- AI-assisted diagnostics

Financial Services

Financial institutions operate in highly competitive environments where milliseconds matter. Edge computing supports faster fraud detection, transaction validation, and algorithmic trading analysis.

Many financial companies also use localized infrastructure to improve compliance and reduce the risk of sensitive data exposure during transmission.

Smart Cities and Transportation

Traffic management systems, connected vehicles, surveillance networks, and public transportation analytics rely heavily on edge processing.

For example, traffic cameras equipped with edge AI can identify congestion patterns instantly without transferring massive video streams continuously to centralized servers.

“The future of infrastructure is not fully centralized or fully local. The strongest systems combine both intelligently.”

Infrastructure ROI and Cost Benefits

Many companies initially explore edge computing for performance reasons, but financial efficiency often becomes the long-term motivation.

Cloud infrastructure is powerful, but continuously transmitting massive amounts of data creates recurring bandwidth and storage costs. This becomes especially expensive for AI video analytics, IoT networks, industrial monitoring, and high-frequency sensor environments.

By processing data locally, businesses can dramatically reduce unnecessary cloud traffic.
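A quick calculation shows the scale involved. The Python sketch below compares a camera that streams continuously with one that uploads only short event clips. The 4 Mbps bitrate and the 10 minutes of clips per day are assumed figures chosen for illustration, and the function name is hypothetical.

```python
def monthly_upload_gb(bitrate_mbps: float, active_seconds_per_day: float,
                      days: int = 30) -> float:
    """Data uploaded per month in gigabytes (1 GB = 1000 MB)."""
    megabits = bitrate_mbps * active_seconds_per_day * days
    return megabits / 8 / 1000


SECONDS_PER_DAY = 24 * 60 * 60

# Assumed figures: one 4 Mbps camera, streaming all day vs. uploading
# only 10 minutes of event clips per day.
continuous_gb = monthly_upload_gb(4, SECONDS_PER_DAY)   # 1296.0 GB
events_only_gb = monthly_upload_gb(4, 10 * 60)          # 9.0 GB
print(continuous_gb, events_only_gb)
```

Under these assumptions a single camera drops from roughly 1.3 TB to 9 GB of upload per month. Actual savings depend on how often events occur and on the provider's pricing.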

During infrastructure analysis across enterprise deployments, one trend repeatedly appeared. Organizations with high-volume, real-time workloads often achieve better long-term operational efficiency through hybrid edge architectures.

Cloud vs Edge Infrastructure Comparison

| Feature | Centralized Cloud | Edge Infrastructure |
|---|---|---|
| Typical Response Latency | 100 ms to 500 ms | 1 ms to 10 ms |
| Bandwidth Usage | Very High | Lower (most data processed locally) |
| Internet Dependency | Continuous Connection Required | Partial Offline Capability |
| Operational Privacy | Higher Data Transit Exposure | More Localized Control |
| Scalability Model | Centralized Expansion | Distributed Expansion |

However, businesses should avoid assuming that edge computing automatically reduces costs in every scenario.

Initial deployment investments may include:

- Local servers and edge gateways

- AI capable hardware

- Distributed monitoring systems

- Cybersecurity infrastructure

- Specialized IT management

The strongest return on investment usually appears in environments involving:

- High-frequency, real-time processing

- Continuous video analytics

- Industrial IoT systems

- Remote operations with unstable connectivity

- AI-powered automation

Practical Cost Optimization Tip

One of the most effective strategies companies are using in 2026 is filtering data locally and sending only the important insights to the cloud. This approach cuts unnecessary storage and transmission costs while still maintaining centralized analytics capabilities.
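As a minimal sketch of this filtering idea, the Python function below reduces a window of raw sensor readings to a small summary plus any values that crossed an alert limit. The function name, payload fields, and temperature values are hypothetical, and a real pipeline would add timestamps and device identifiers.

```python
from statistics import mean


def summarize_for_cloud(readings: list[float], alert_limit: float) -> dict:
    """Reduce a window of raw sensor readings to a small cloud payload."""
    if not readings:
        return {"count": 0, "alerts": []}
    return {
        "count": len(readings),
        "mean": round(mean(readings), 2),
        "max": max(readings),
        # Only readings above the limit are forwarded individually.
        "alerts": [r for r in readings if r > alert_limit],
    }


# Hypothetical temperature window in degrees Celsius.
payload = summarize_for_cloud([61.2, 60.8, 61.0, 75.4, 61.1], alert_limit=70.0)
print(payload)
```

The cloud still receives enough to chart trends and investigate alerts, while the raw readings stay on the local device.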

Security and Privacy Challenges

Although edge computing improves speed and operational flexibility, it also creates new cybersecurity responsibilities.

Traditional cloud environments are centralized, which makes them easier to monitor from a single location. Edge computing distributes workloads across many devices, gateways, and local systems, and each of those is a potential entry point. The overall attack surface grows accordingly.

For example, poorly secured edge devices inside factories, retail stores, or telecom infrastructure can become entry points for attackers if firmware updates and encryption standards are neglected.

Businesses expanding edge infrastructure in 2026 are increasingly combining it with quantum-resistant encryption systems and zero-trust security models to strengthen distributed protection.

Key Security Best Practices

- Encrypt all communications between devices and servers

- Use zero-trust authentication systems

- Deploy automatic firmware update policies

- Monitor distributed infrastructure continuously

- Restrict physical access to local hardware

- Use AI-powered anomaly detection tools

- Segment networks to reduce lateral attack movement
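To make the first practice on this list concrete, here is a minimal sketch of a TLS client configuration for an edge device, using Python's standard `ssl` module. The function name and the optional certificate paths are assumptions for illustration. The article does not prescribe a specific library or protocol version.

```python
import ssl
from typing import Optional


def build_device_tls_context(client_cert: Optional[str] = None,
                             client_key: Optional[str] = None) -> ssl.SSLContext:
    """TLS context for an edge device connecting to its upstream server."""
    # The defaults already verify the server certificate and hostname.
    context = ssl.create_default_context(ssl.Purpose.SERVER_AUTH)
    # Refuse legacy protocol versions.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    if client_cert and client_key:
        # Presenting a device certificate enables mutual TLS, so the
        # server can verify the device as well.
        context.load_cert_chain(certfile=client_cert, keyfile=client_key)
    return context


context = build_device_tls_context()
```

Passing a per-device certificate and key lets the server authenticate each device individually, which fits naturally with the authentication practice listed above.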

One mistake many businesses make is focusing only on performance improvements while underestimating operational security management. Successful edge deployments require both speed and strong governance.

Who Should Adopt Edge Computing in 2026

Not every organization needs a large-scale edge deployment immediately. The best approach depends on workload intensity, operational requirements, and digital maturity.

Best Fit Businesses

- Manufacturing companies using industrial IoT devices

- Retail chains operating smart analytics systems

- Healthcare providers handling real-time monitoring

- Financial firms processing high-speed transactions

- Logistics companies managing connected fleets

- Media companies processing live video streams

- Telecom providers supporting 5G services

Businesses That Should Evaluate Carefully

- Very small organizations with limited digital workloads

- Businesses without IT management capabilities

- Companies operating mostly static applications

- Organizations lacking cybersecurity resources

- Businesses without clear operational use cases

Hybrid Infrastructure is Becoming the Standard

For most enterprises, the future is not “edge only” or “cloud only.” The strongest infrastructure strategies combine centralized cloud scalability with localized edge intelligence.

Cloud systems remain ideal for large-scale storage, long-term analytics, and centralized management. Edge systems handle speed-sensitive processing close to devices and users.

“In 2026, competitive infrastructure is no longer defined by where servers exist. It is defined by where decisions happen fastest.”

Best Practices for Edge Computing Deployment

Organizations planning edge adoption should avoid treating it as a simple hardware upgrade. Successful deployments require careful planning across networking, AI integration, cybersecurity, and operational management.

Start with One High Value Use Case

Businesses often achieve better results by starting with a focused deployment instead of attempting an enterprise-wide transformation immediately.

Examples include predictive maintenance, smart surveillance, or local AI analytics.

Measure Latency Before and After Deployment

Many companies deploy edge systems without clearly measuring operational improvements. Tracking latency reduction, bandwidth savings, and downtime improvements helps justify future investment.
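A simple way to track latency is to record response times before and after the rollout and compare the median and the tail rather than the average alone. The sketch below uses the nearest-rank percentile method. The function names and the choice of p50 and p95 are illustrative assumptions.

```python
import math


def percentile(samples_ms: list[float], pct: int) -> float:
    """Nearest-rank percentile of a list of latency samples."""
    if not samples_ms:
        raise ValueError("no samples")
    ordered = sorted(samples_ms)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def compare_latency(before_ms: list[float], after_ms: list[float]) -> dict:
    """Median and tail latency before and after a deployment."""
    return {
        "p50_before": percentile(before_ms, 50),
        "p50_after": percentile(after_ms, 50),
        "p95_before": percentile(before_ms, 95),
        "p95_after": percentile(after_ms, 95),
    }
```

Tail percentiles matter here because a safety alert or checkout request that occasionally takes ten times longer than the median is exactly the case edge deployments are meant to eliminate.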

Keep Cloud and Edge Systems Integrated

Edge computing works best when integrated with centralized cloud infrastructure. Local systems should still synchronize important analytics, backups, and monitoring data with cloud platforms.

Prepare for Device Management at Scale

Managing hundreds or thousands of distributed devices requires automation. Companies should invest in centralized monitoring dashboards, remote update systems, and automated diagnostics.
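One basic building block of automated diagnostics is a heartbeat check: each device reports in periodically, and the monitoring side flags any device that has gone quiet. The sketch below assumes heartbeats are stored as Unix timestamps keyed by device ID. The function name, the device IDs, and the five-minute timeout are hypothetical.

```python
def find_stale_devices(last_seen: dict[str, float], now: float,
                       timeout_s: float = 300.0) -> list[str]:
    """Return IDs of devices whose last heartbeat is older than the timeout."""
    return sorted(
        device_id
        for device_id, seen_at in last_seen.items()
        if now - seen_at > timeout_s
    )


# Hypothetical fleet snapshot: seconds since an arbitrary start time.
heartbeats = {"gateway-01": 1000.0, "camera-07": 400.0, "sensor-12": 990.0}
stale = find_stale_devices(heartbeats, now=1010.0)
print(stale)  # ['camera-07']
```

Running a check like this centrally, on a schedule, scales to thousands of devices far better than inspecting each site by hand.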

The Future of Edge Computing

Edge computing is rapidly reshaping how digital infrastructure operates. Artificial intelligence, connected devices, autonomous systems, and industrial automation all depend increasingly on localized decision-making.

Instead of replacing cloud computing entirely, edge infrastructure is transforming the cloud into a more distributed ecosystem where processing happens at multiple levels simultaneously.

Several trends are expected to accelerate adoption through 2026 and beyond:

- Expansion of 5G networks

- Growth of AI-powered devices

- Increasing IoT sensor deployment

- Higher demand for real-time analytics

- Rising cloud bandwidth costs

- Stronger data privacy requirements

Businesses adapting early are likely to benefit from lower latency, improved automation, stronger privacy controls, and more efficient AI deployment strategies.

Edge computing is no longer just an infrastructure trend. It is becoming a foundational layer of modern digital operations.

Final Thoughts

The biggest misconception about edge computing is that it exists to replace the cloud. In reality, the future belongs to hybrid infrastructure models that combine centralized scalability with localized intelligence.

Organizations investing in AI, automation, IoT systems, and real-time analytics increasingly require infrastructure capable of making decisions quickly and reliably.

For businesses handling high-frequency data or latency-sensitive operations, edge computing is quickly becoming less of an optional upgrade and more of a competitive requirement.

Companies that begin planning early, secure their infrastructure properly, and focus on practical use cases will likely gain significant operational advantages as distributed computing continues expanding through 2026.

Frequently Asked Questions

What is edge computing?

Edge computing is a distributed computing model where data processing happens closer to the source of the data instead of relying entirely on distant cloud servers.

Why is edge computing important in 2026?

Modern AI systems, industrial automation, IoT devices, and real-time analytics need faster processing and lower latency than centralized cloud systems can reliably provide.

Does edge computing replace cloud computing?

No. Most organizations use a hybrid model where cloud infrastructure handles centralized storage and analytics while edge systems process latency-sensitive workloads locally.

What industries benefit most from edge computing?

Manufacturing, healthcare, finance, transportation, logistics, telecom, and retail industries are among the sectors seeing the strongest benefits from edge infrastructure.

Is edge computing more secure than cloud computing?

Edge computing can improve privacy by keeping sensitive data local, but it also introduces new cybersecurity challenges because infrastructure becomes more distributed.

What is the difference between edge computing and cloud computing?

Cloud computing processes workloads inside centralized remote data centers, while edge computing processes workloads closer to devices and users for faster response times.

Can small businesses use edge computing?

Yes, but the value depends on workload requirements. Small businesses using smart surveillance, IoT systems, or AI analytics may benefit from lightweight edge deployments, while basic office operations may not require it yet.